Notably, because the stable PyTorch release at the time supported only CUDA 11.1, you can only run under the CUDA 11.1 toolkit, even if you previously installed the CUDA 11.2 toolkit manually.
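To see which CUDA version a given PyTorch build was compiled against, as opposed to whatever toolkit is installed system-wide, you can ask PyTorch itself. The following is a minimal sketch, assuming PyTorch may or may not be installed in the current environment; the helper name `describe_torch_cuda` is hypothetical and only `torch.version.cuda` and `torch.cuda.is_available()` are real PyTorch attributes.

```python
import importlib.util


def describe_torch_cuda():
    """Report the CUDA version PyTorch was built with, if PyTorch is present.

    Hypothetical helper for illustration; it returns a plain dict.
    """
    # Avoid an ImportError when PyTorch is not installed at all.
    if importlib.util.find_spec("torch") is None:
        return {
            "torch_installed": False,
            "built_with_cuda": None,
            "gpu_available": False,
        }

    import torch

    return {
        "torch_installed": True,
        # None for CPU-only builds, otherwise a string such as "11.1".
        "built_with_cuda": torch.version.cuda,
        # True only if a usable GPU and driver are visible to PyTorch.
        "gpu_available": torch.cuda.is_available(),
    }


if __name__ == "__main__":
    print(describe_torch_cuda())
```

If `built_with_cuda` differs from the toolkit you installed by hand, that is consistent with the behaviour described above.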

But I can't find any other CUDA installs, and apparently neither can faceswap sysinfo. One thing that's really strange is that when I run "sudo nvidia-smi", it does display a CUDA version, and it's not the version installed by faceswap (which is 11.2, IIRC).

    Sat Jun 25 21:28:54 2022

    # packages in environment at /home/(((redacted_username)))/miniconda3:
    conda-package-handling 1.8.1 py39h7f8727e_0
    typing_extensions file:///opt/conda/conda-bld/typing_extensions_1647553014482/work
    threadpoolctl file:///Users/ktietz/demo/mc3/conda-bld/threadpoolctl_1629802263681/work
    mkl-random file:///tmp/build/80754af9/mkl_random_1626186066731/work
    joblib file:///tmp/build/80754af9/joblib_1635411271373/work

Today, CUDA 11.2 is introducing improved user experience and application performance through a combination of driver/toolkit compatibility enhancements, a new memory suballocator feature, and compiler enhancements including an LLVM upgrade. This new 11.2 release also delivers programming model updates to CUDA Graphs and Cooperative Groups.

... the Windows Subsystem for Linux, and I specifically need CUDA 11.2 and cuDNN 8.1. I have been successful in installing CUDA 11.8, but this is the wrong version for TF2.
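On the `nvidia-smi` discrepancy described above: the "CUDA Version" field in the `nvidia-smi` header reports the highest CUDA version the installed driver supports, not the toolkit version installed in a conda environment, so the two numbers can legitimately differ. The sketch below pulls both fields out of the header line. The function name `parse_smi_header` is hypothetical, and the sample header uses illustrative version numbers that are not taken from the post.

```python
import re

# Sample header line in the layout nvidia-smi prints. The version numbers
# are illustrative only and do not come from the post above.
SAMPLE_HEADER = (
    "| NVIDIA-SMI 510.47.03    Driver Version: 510.47.03    CUDA Version: 11.6     |"
)

_HEADER_RE = re.compile(
    r"Driver Version:\s*(?P<driver>[\d.]+)\s+CUDA Version:\s*(?P<cuda>[\d.]+|N/A)"
)


def parse_smi_header(text):
    """Extract the driver version and the driver's maximum supported CUDA version.

    Returns None if the expected header line is not found in the text.
    """
    match = _HEADER_RE.search(text)
    if match is None:
        return None
    return {
        "driver": match.group("driver"),
        "driver_max_cuda": match.group("cuda"),
    }


if __name__ == "__main__":
    print(parse_smi_header(SAMPLE_HEADER))
```

On a real machine you could pass in the text returned by `subprocess.run(["nvidia-smi"], capture_output=True, text=True).stdout` instead of the sample string.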

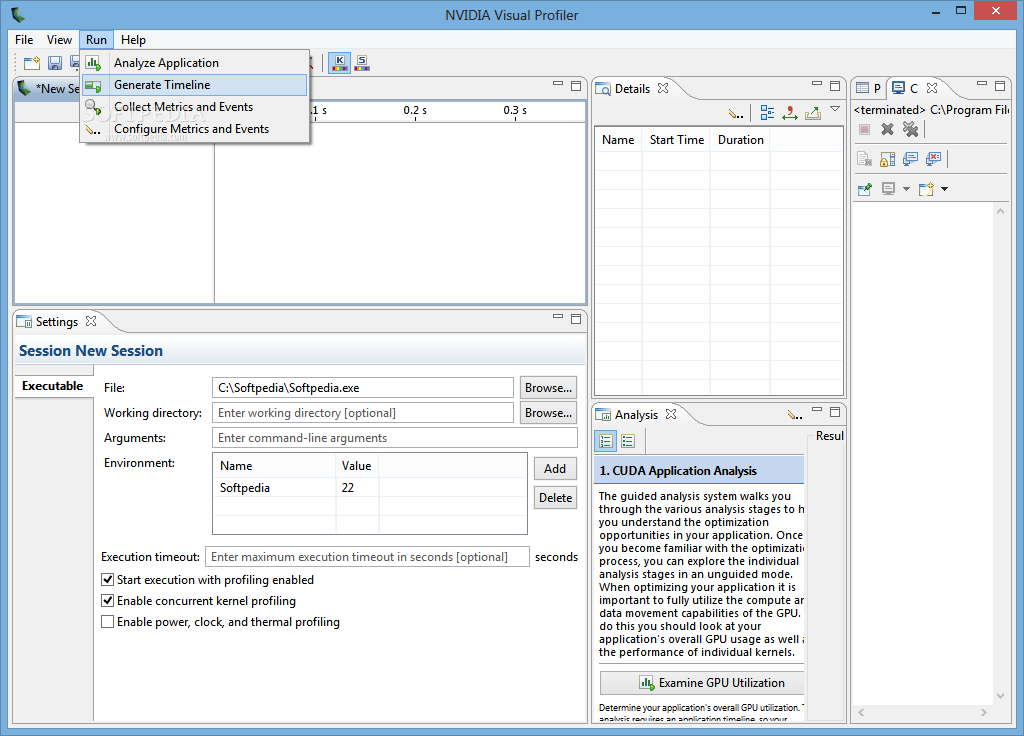

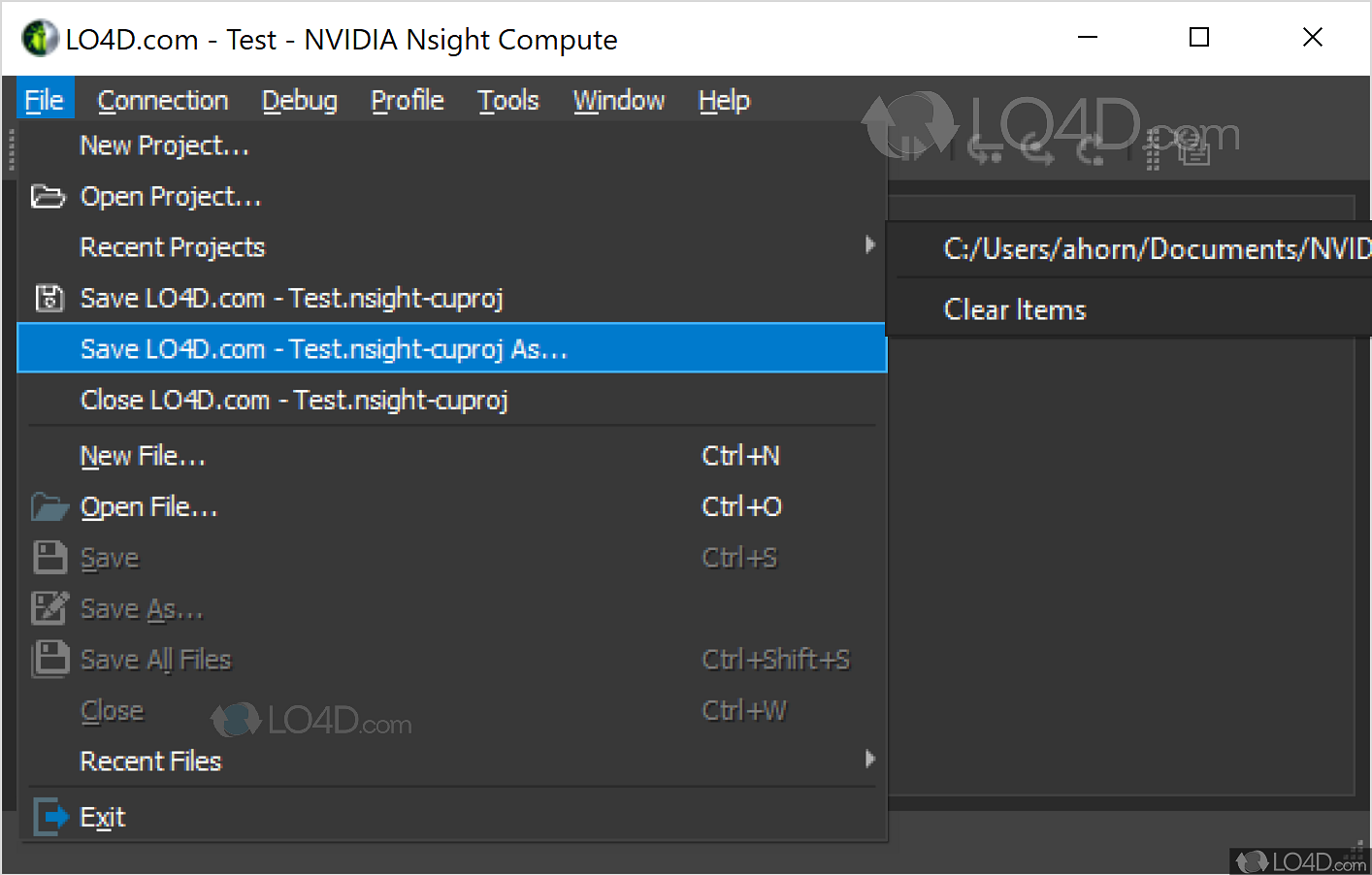

    = System Information =
    py_command: /home/(((redacted_username)))/faceswap/faceswap.py
    sys_ram: Total: 14992MB, Available: 14411MB, Used: 280MB, Free: 11950MB
    imageio-ffmpeg file:///home/conda/feedstock_root/build_artifacts/imageio-ffmpeg_1649960641006/work

The question is about the version lag of PyTorch cudatoolkit vs. NVIDIA cuda toolkit (mind the space) for the times when there is a version lag.

The CUDA driver's compatibility package only supports ... However, if you are running on Data Center GPUs (formerly Tesla), for example, T4, you may use NVIDIA driver release 418.40 (or later R418), 440.33 (or later R440), or 450.51 (or later R450).

The fourth and final step was preparing the YOLO model output for post-processing.

CUDA is the most powerful software development platform for building GPU-accelerated applications, providing all the components needed to develop applications targeting every GPU platform. CUDA 11 introduces support for the new NVIDIA A100 based on the NVIDIA Ampere architecture, Arm server processors, performance-optimized libraries, and new developer tools and improvements for A100. Read our new technical developer blog, "CUDA 11 Features Revealed", for a deeper dive on the breadth of software advances, and more specific details about support for the new NVIDIA Ampere GPU architecture.
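The driver minimums quoted above for Data Center GPUs (418.40 on R418, 440.33 on R440, 450.51 on R450) can be expressed as a small lookup. This is a rough sketch under the assumption that "or later R418" means a later release within the same branch; the names `DATACENTER_MINIMUMS` and `meets_quoted_minimum` are hypothetical, and the function deliberately returns `None` for branches the text does not mention rather than guessing.

```python
# Minimum driver release per branch, as quoted in the text for Data Center GPUs.
DATACENTER_MINIMUMS = {418: (418, 40), 440: (440, 33), 450: (450, 51)}


def parse_driver_version(version):
    """Turn a string such as '450.51.06' into (450, 51, 6) for tuple comparison."""
    return tuple(int(part) for part in version.split("."))


def meets_quoted_minimum(version):
    """Check a driver version against the quoted per-branch minimums.

    Returns True or False for the R418, R440, and R450 branches, and None
    for any other branch, since the text says nothing about those.
    """
    parsed = parse_driver_version(version)
    minimum = DATACENTER_MINIMUMS.get(parsed[0])
    if minimum is None:
        return None
    # Compare only (branch, minor); a trailing patch component is ignored.
    return parsed[:2] >= minimum


if __name__ == "__main__":
    for candidate in ("418.39", "440.33.01", "450.51.06", "470.57.02"):
        print(candidate, meets_quoted_minimum(candidate))
```

The driver version to feed in is the "Driver Version" field that `nvidia-smi` prints in its header.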
